I am an Assistant Professor in Computer Science and Software Engineering at McMaster University. Prior to McMaster, I was at Cornell University, where I earned my PhD in the Computing and Information Science Department. I have been fortunate to be advised and mentored by Dr. Jeffrey M. Rzeszotarski, Dr. David Mimno and Dr. Claire Cardie. My research lies at the intersection of Human-Computer Interaction (HCI) and Artificial Intelligence (AI) and has been generously funded by a multi-year Bloomberg Data Science Fellowship. I design, build and deploy interactive Machine Teaching (MT) systems that enable AI non-experts such as journalists, data analysts, and healthcare professionals to customize, adopt and work efficiently alongside AI tools. My research lab focuses on building AI systems that empower people to achieve more, think better and extend the boundaries of their capabilities and imagination.

I am currently hiring! I have fully funded positions available in my lab for stellar PhD and Master's students to work on exciting new challenges at the intersection of AI and HCI. Listed below are some of my lab's past research projects. Interested students, please email me your CV and a brief summary of your research interests at mishrs23 at mcmaster dot ca.

Research Interests

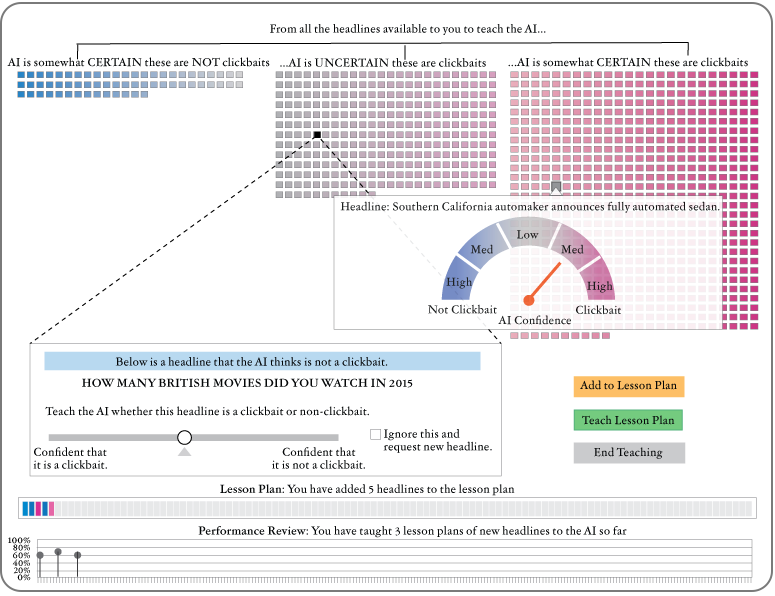

Human Factors in Machine Teaching

Machine Teaching (MT) is not just a statistical process but also a sensemaking process. Teachers in MT environments 1) first form a perception of the learner, either through prior knowledge or prior use, 2) use the system to optimize and adapt the learner, 3) perceive its performance by issuing a test, and 4) continue teaching until satisfactory results are obtained. In this research, I modelled how teachers' expectations of the machine learner shape their feedback, and how their perceptions of the learning process shape the teaching outcome. Deploying this system with actual users sheds light on the role of stakeholders and models in the development of teachable systems. We discovered that when teaching high-performing models (e.g., Large Language Models), MT systems need to manage user expectations to elicit effective feedback.

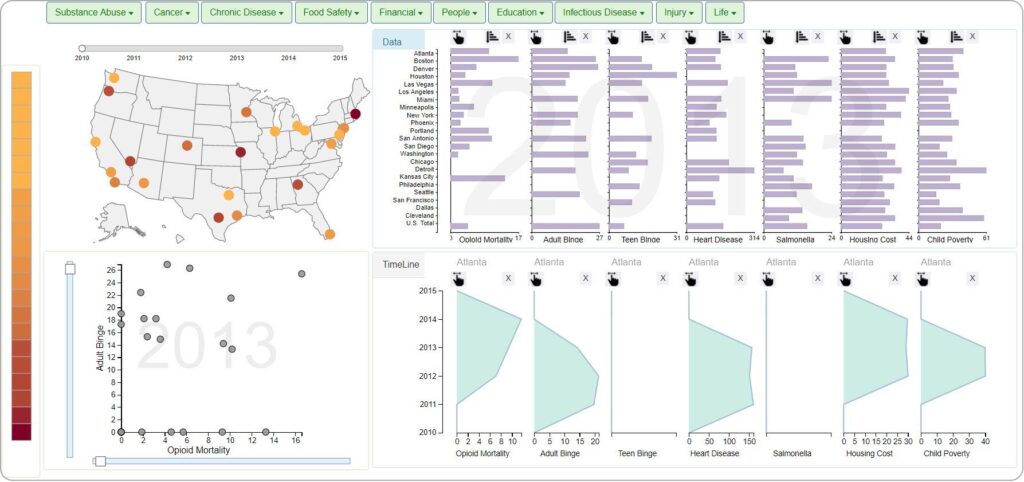

Leveraging Human Concepts in Visual Data Analysis

While machines make decisions using statistical representations (e.g., the pixels of an image), people use data concepts (e.g., a red fire hydrant) to make sense of the world. This tool demonstrates a novel interaction framework that helps users share their concepts and hypotheses with the system. Experimental evaluations with this tool demonstrate how encouraging analysts to share their conceptual models with the system helps resolve disagreements effectively within an interactive data analysis ecosystem.

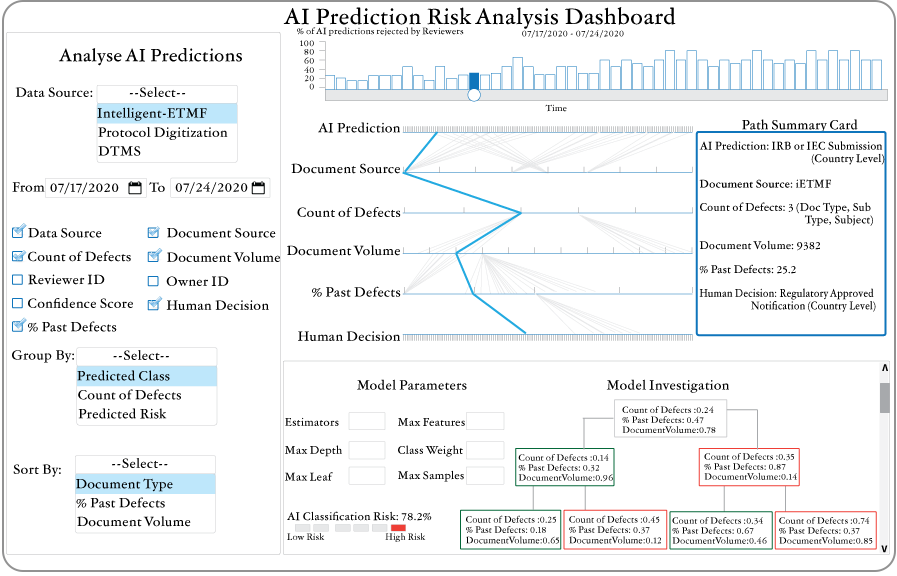

AI Explainability for Clinical Trial Practitioners

Clinical trials of new drugs are executed at massive scale, and the FDA requires that trials be well documented and accurately organized before a drug can be approved. In this research, I worked to improve how Clinical Trial Officers and AI collaborate to organize large collections of clinical documents (>50,000) for FDA inspections of critical vaccines such as AstraZeneca's. I designed this Risk Analysis Dashboard to help over 5,000 Clinical Trial Officers analyze the risks associated with each AI prediction, use concept-based explanations to review algorithmic decisions, and identify documents that might be incorrectly classified.

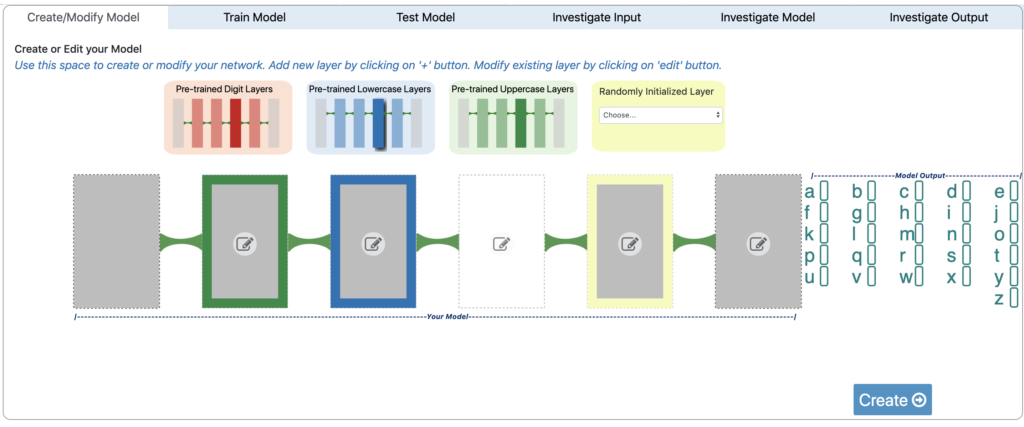

Interactive Transfer Learning

Transfer learning is a technique for incorporating knowledge from one task into another task in order to improve performance. I designed and built this interactive machine learning tool to explore how ML non-experts can leverage models curated by experts to build interesting models of their own. The extensive user evaluation of the system demonstrates how perceptions of the machine learning process play a critical role.

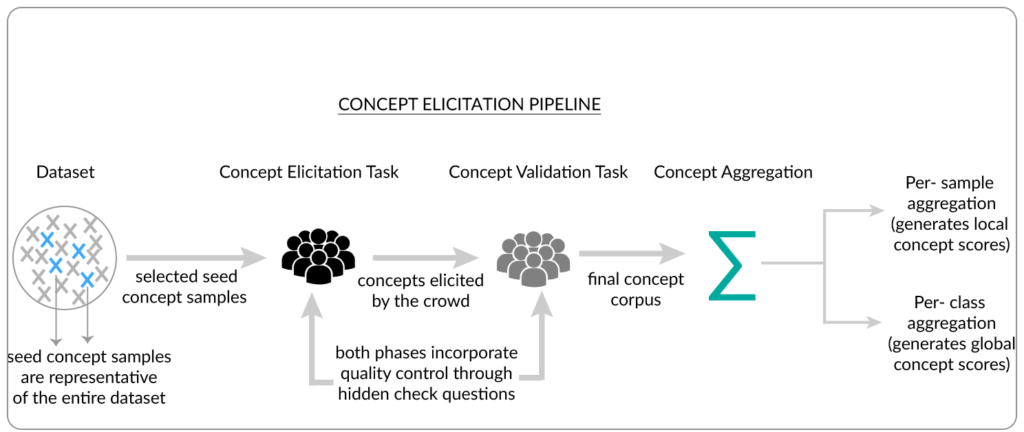

Crowdsourcing Concept-based Explanations

Concepts are abstract representations that humans use to make sense of their world and to distinguish meaningfully between two entities (e.g., distinguishing a tiger from a leopard using the concepts of stripes and spots). In this research, I propose a crowdsourcing-based concept elicitation technique for designing concept-based explanations that improve the interpretability of black-box ML models. A thorough evaluation of these designs shows that the explanations with the highest entropy aren't necessarily the most useful for AI non-experts.

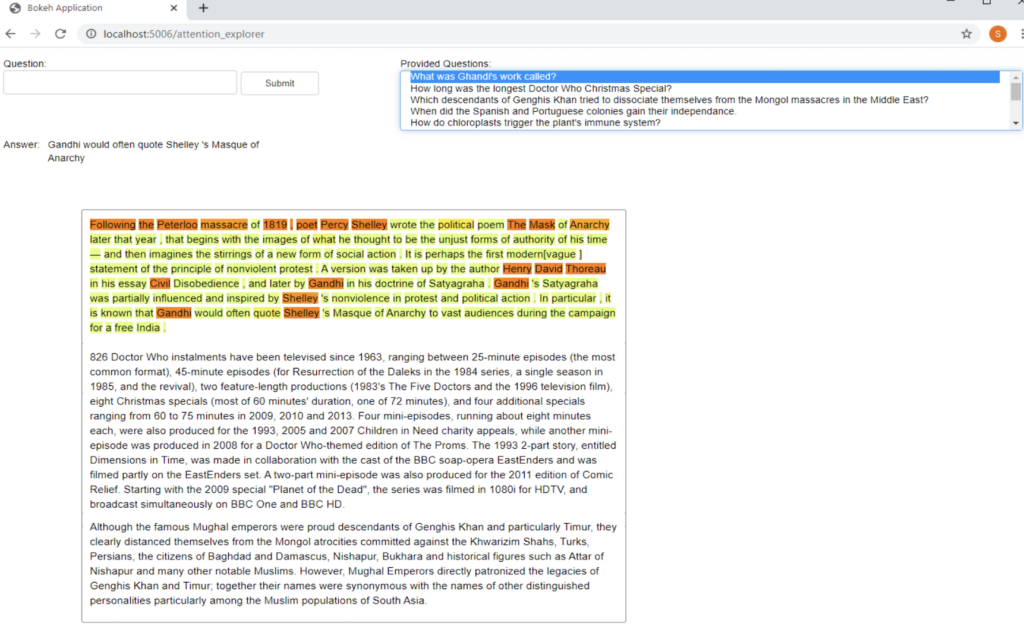

Explaining Attention: A Human-Centered Approach

The attention mechanism is an important tool used in Natural Language Processing to improve statistical performance on tasks such as text classification, machine translation and question answering. However, attention means very different things from the machine's point of view and from the human's. In this research, I explore how the attention mechanism can lead to confusing interpretations of machine decisions, and I propose a human-centered approach to understanding and using attention as a potential explanation.
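For readers unfamiliar with the machine view of attention, the following is a minimal sketch of scaled dot-product attention weights. It is an illustration only, not the models studied in this research; the tokens, vectors, and function names are all hypothetical.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_weights(query, keys):
    """Scaled dot-product attention weights of one query over several keys."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    return softmax(scores)

# Hypothetical toy vectors, one key per token (assumed values, not real data).
tokens = ["the", "movie", "was", "great"]
keys = [[0.1, 0.0], [0.5, 0.2], [0.1, 0.1], [1.0, 0.9]]
query = [1.0, 1.0]

weights = attention_weights(query, keys)
for token, weight in zip(tokens, weights):
    print(f"{token}: {weight:.3f}")
```

To the model, these weights are only the mixing coefficients used to combine token representations. A person reading the same numbers tends to treat them as a ranking of which words "mattered", and that gap is where confusing interpretations can arise.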

Gestural Interactions with Data

This fun installation, which I designed, engages science museum visitors in data exploration through full-body interactions. I explored how to craft an engaging Human-Data Interaction experience for visitors of the Discovery Place Science Museum in Charlotte, NC, using a fully functional prototype that employs the following strategies: instrumenting the floor, forcing collaboration, implementing multiple body movements to control the same effect, and using the visitors' silhouettes. This work informs the design space of embodied interactions with data and is part of the foundational research featured in the book Data Through Movement: Designing Embodied Human-Data Interaction for Informal Learning.

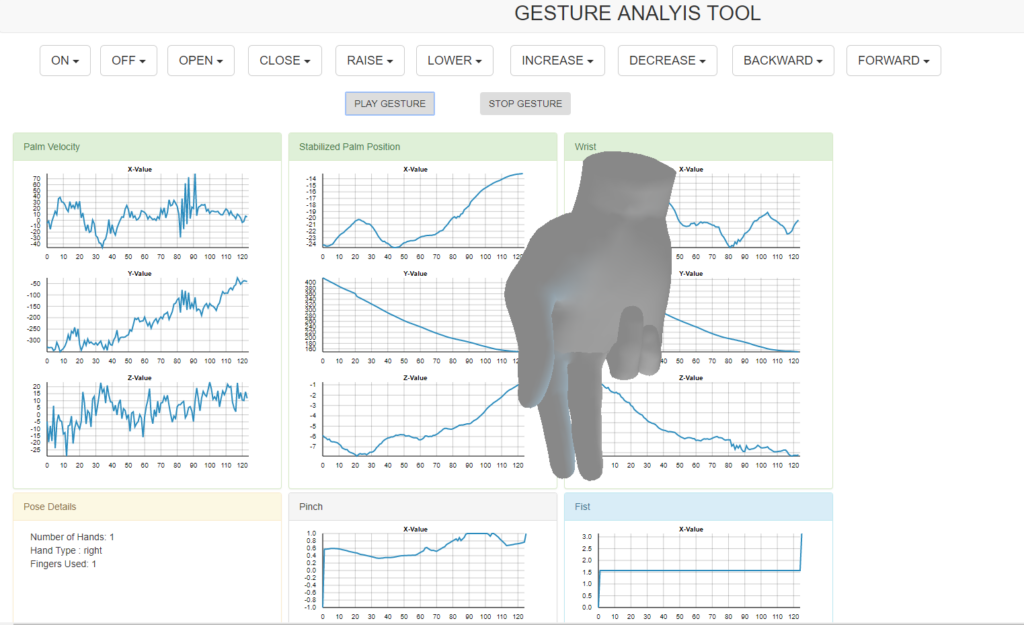

Gesture Analysis Tool for Interaction Designers

As more designers seek to incorporate gestural interactions into their systems, they face significant hurdles in designing optimal gestures that are both human-friendly and machine-interpretable. I designed and built this tool to help interaction designers analyze gestures gathered using context-specific elicitation techniques. The visual overlay of a given hand gesture with its decomposed, machine-interpretable components highlights the differences between gestures that are conceptually similar but statistically different. Visual analysis tools for complex human gestures are useful for dissecting why a system might be behaving in undesirable ways and for designing interventions.

Pedestrian Detection System

I was part of a team that designed and built a pedestrian detection system to assist drivers on the road. This research took place before the deep learning boom, so we handcrafted the features for different pedestrians using good old-fashioned Computer Vision techniques and meticulously optimized them on application-specific hardware. We later experimented with deep learning features, which proved to be more accurate but less interpretable. The trade-off between accuracy and interpretability is a frequent design choice when bringing products with deep learning models to market. (Product Website: Mando, Picture Credit: Dalal and Triggs)

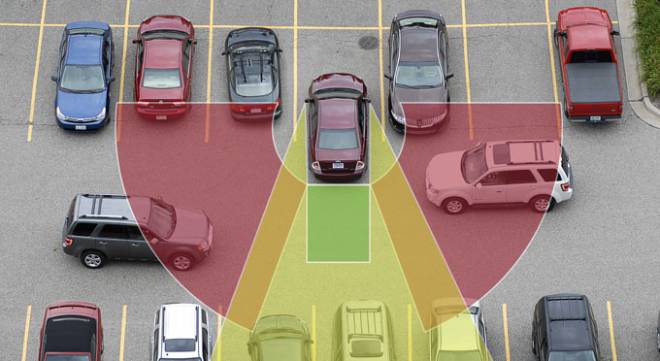

Cross-Traffic Alert System

I led the team that designed the algorithms for Cross-Traffic Alert systems. Based on input data from LIDAR sensors (a sensor popularly used in robotics and autonomous cars), the system detects any traffic approaching from the rear of the car and alerts the driver to avoid a potential collision. The algorithm was developed on the TI Embedded Vision Engine and uses fixed-point arithmetic to accelerate real-time computation to 100 FPS. (Picture Credit and Product Website: Continental Automotive)
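As a rough illustration of what fixed-point computation means, the sketch below represents real numbers as scaled integers so that multiplication needs only integer operations. The Q8 format, the names, and the values are assumptions chosen for illustration and are not taken from the production system.

```python
FRACTION_BITS = 8          # assumed Q8 format: 8 bits after the binary point
SCALE = 1 << FRACTION_BITS  # 256

def to_fixed(x):
    """Convert a float to its scaled-integer (fixed-point) representation."""
    return int(round(x * SCALE))

def fixed_mul(a, b):
    """Multiply two fixed-point values; shift right to restore the scale."""
    return (a * b) >> FRACTION_BITS

def to_float(a):
    """Convert a fixed-point value back to a float for inspection."""
    return a / SCALE

a = to_fixed(1.5)
b = to_fixed(2.25)
product = fixed_mul(a, b)
print(to_float(product))
```

Integer multiplies and shifts are typically much cheaper than floating-point operations on embedded vision hardware. The cost is limited precision, so the number of fraction bits has to be chosen per application.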

Publications

- Human Expectations and Perceptions of Learning in Machine Teaching. Mishra, S., Rzeszotarski, J. (2023)

ACM User Modeling, Adaptation and Personalization (ACM UMAP)

- Enhancing thoracic disease detection using chest x-rays from PubMed Central Open Access. Lin, M., Hou, B., Mishra, S., Yao, T., Huo, Y., Yang, Q., Wang, F., Shih, G., Peng, Y. (2023)

Journal of Computers in Biology and Medicine

- Teachable Filters: A framework of Interactive Machine Teaching for Information Filtering. Mishra, S., Ryerkerk, M., Lockerman, Y., Eis, D., Rzeszotarski, J. (2023) [Coming Soon]

- Designing Interactive Transfer Learning Tools for ML Non-Experts. Mishra, S., Rzeszotarski, J. (2021)

ACM Conference on Human Factors in Computing Systems (ACM CHI) Best Paper Award

- Crowdsourcing and Evaluating Concept-driven Explanations of Machine Learning Models. Mishra, S., Rzeszotarski, J. (2021)

Proceedings of the ACM on Human Computer Interaction (ACM CSCW)

- Towards Natural Language Interactions for Qualitative Text Analysis. Mishra, S., Rzeszotarski, J. (2020)

Workshop on Artificial Intelligence for HCI: A Modern Approach, ACM Conference on Human Factors in Computing Systems (ACM CHI)

- Concept-Driven Visual Analytics: an Exploratory Study of Model- and Hypothesis-Based Reasoning with Visualizations. Choi, I., Childers, T., Raveendranath, N., Mishra, S., Harris, K., Reda, K. (2019)

ACM Conference on Human Factors in Computing Systems (ACM CHI)

- Towards Concept Driven Visual Analytics. Choi, I., Mishra, S., Harris, K., Raveendranath, N., Childers, T., Reda, K. (2018)

IEEE Visualization Conference (IEEE VIS) – Video

- Full Body Interaction Beyond Fun: Engaging Museum Visitors in Human-Data Interaction. Mishra, S., Cafaro, F. (2018)

ACM Conference on Tangible, Embedded, and Embodied Interaction (ACM TEI)

- A Complete Pedestrian Detection Framework. Mishra, S., Lad, R., Malhotra, V. (2013)

Global Tech Congress, Mando, South Korea (Proprietary of Mando, South Korea)

- Maze solving algorithms for Micro Mouse. Mishra, S., Bande, P. (2008)

IEEE Conference on Signal Image Technology and Internet Based Systems (IEEE SITIS)

- Mobile controlled smart device for multiple device regulation. Tohan, N., Mishra, S., Bande, P., Henry, R. (2009)

International Conference on Recent Advances in Material Processing Technology (RAMPT)

- Advanced Algorithms for Micro Mouse Maze Solving. Mishra, S., Bande, P. (2009)

International Conference on Embedded Systems & Applications (ESA)